Interrater Reliability of the Postoperative Epidural Fibrosis Classification: A Histopathologic Study in the Rat Model

Intra-Rater and Inter-Rater Reliability of a Medical Record Abstraction Study on Transition of Care after Childhood Cancer

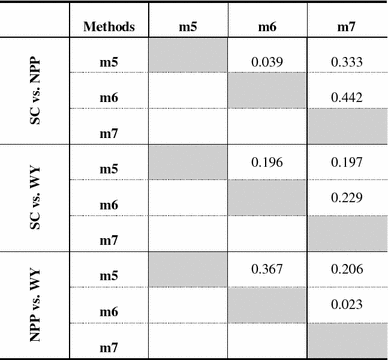

Table 4 | Spatially explicit quantification of the interactions among ecosystem services | SpringerLink

![Statistics Part 15: Measuring agreement between assessment techniques: Intraclass correlation coefficient, Cohen's Kappa, R-squared value – Data Lab Bangladesh](https://datalabbd.com/wp-content/uploads/2019/06/15c-1.png)

Statistics Part 15: Measuring agreement between assessment techniques: Intraclass correlation coefficient, Cohen's Kappa, R-squared value – Data Lab Bangladesh

Cohen's Kappa, Positive and Negative Agreement percentage between AT...

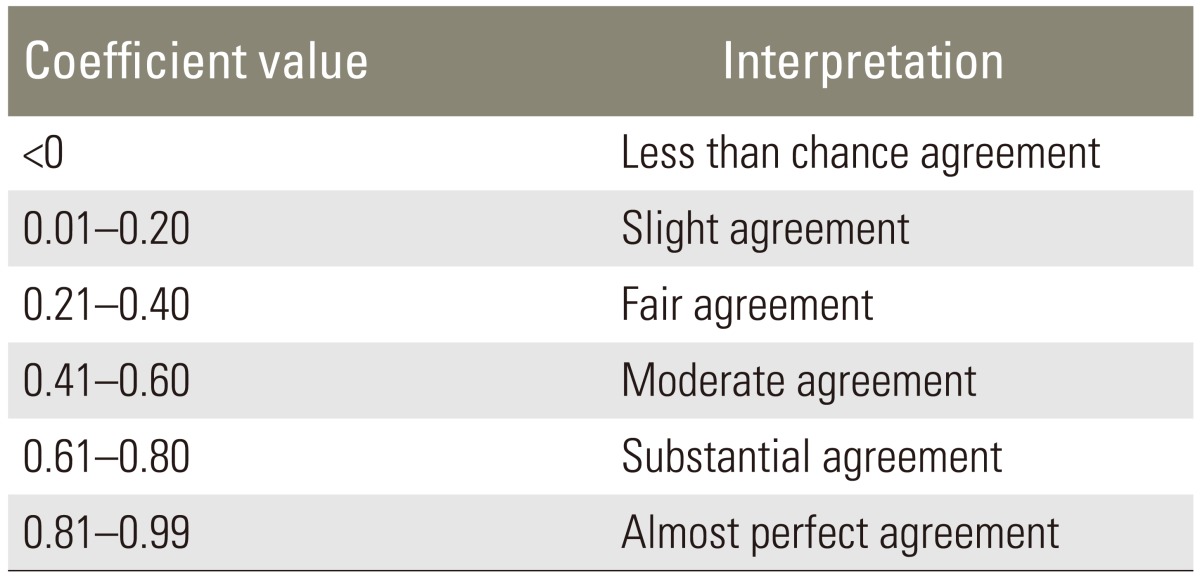

Interpretation of Kappa Values. The kappa statistic is frequently used… | by Yingting Sherry Chen | Towards Data Science

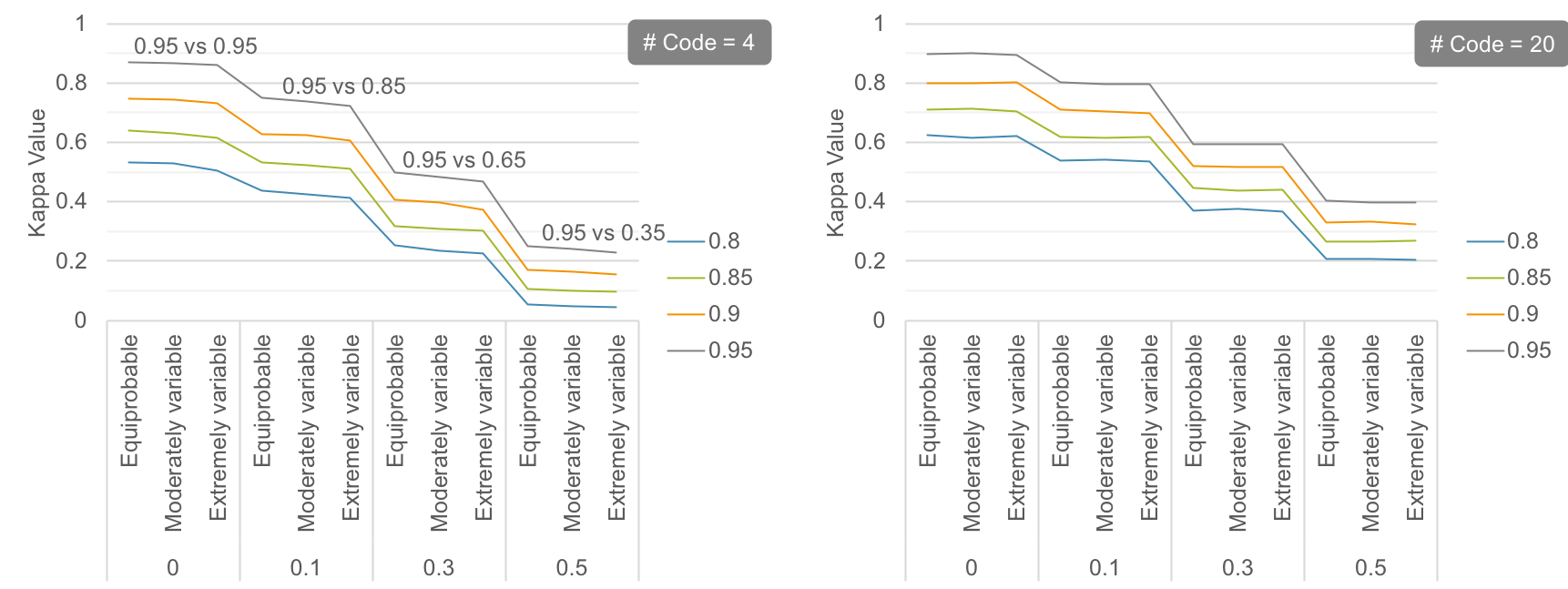

![PDF: Understanding interobserver agreement: the kappa statistic | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/e45dcfc0a65096bdc5b19d00e4243df089b19579/3-Table2-1.png)